By Andrew Buss

At the Intel Developer Forum 2012 in San Francisco, Intel looked to deliver on its vision of transforming itself from a provider of server chips into an infrastructure technology provider. It made a number of announcements aimed at breaking down some of the barriers that have created isolation and fragmentation across the whole of the IT infrastructure stack.

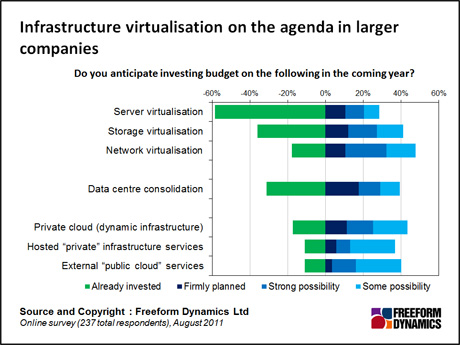

This is an important shift for Intel. Although investment in servers will continue to be important, attention is moving towards getting the other core elements of IT service delivery to play their part, and hence much more investment is starting to go into the virtualisation of storage and networking (Figure 1).

Figure 1

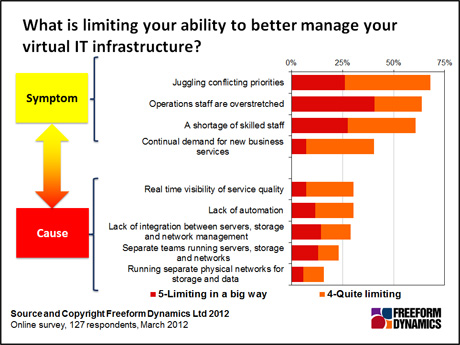

From our point of view at Freeform Dynamics, this is generally a good thing, but unless this investment is thought through and the wider context considered, it may not deliver all of the benefits that could be obtained. A little while back we looked at the challenges that many companies face in getting their servers, storage and networking working well together.

Many, although by no means all, of the issues are caused by a failure to recognise the need to invest in infrastructure integration and management to create a shared IT service delivery platform. Developments that bring these elements more tightly together, while removing the need for explicit and often expensive investment, are a good thing (Figure 2).

Figure 2

On the compute side of the stack, Intel is seeking to continue to advance in its core market of microprocessors. This includes not only introducing new generations of CPU core with better overall performance, but also enhancing the capabilities when it comes to virtualisation, management and reliability.

These developments reduce many of the barriers to further virtualisation of workloads, further increasing the pressure on the rest of the infrastructure to evolve in order to keep up. Therefore it’s no surprise that Intel has shifted its focus to both storage and networking.

Because of the utilisation and flexibility challenges inherent in direct attached storage, disks moved out of the servers and into Storage Area Networks (SANs). While this was useful, SANs tended to be built from a number of different, usually incompatible, products or solutions, which led to silos of capability. The end result has been pockets of shared infrastructure rather than completely flexible, Cloud-like storage.

This has led to storage architectures evolving. From a situation where custom chips and proprietary architectures were common, and indeed essential, for the storage networks to function effectively, there has been a marked shift towards using more commodity components, even though the products themselves still tend to be quite specific to the vendor. But this means that most storage networks are a mix of heterogeneous products that are difficult to pull together.

Intel recognises this and is working on optimising the low-level components so that many of the higher-level design features that have separated the storage hardware product lines can be effectively eliminated. As more hardware dependencies are removed, software and management will be able to enable much more flexible storage pools.

This will take time and investment, not to mention the co-operation of key storage vendors and partners, but it is going to be a key factor in the ability of Cloud-like architectures to make more effective use of storage assets.

This brings us to networking, which for a long time has been dominated by a select group of vendors. When computing was physical, networking was a completely separate infrastructure touched only by the physical network adapters installed in the server.

With the advent of virtualisation and cloud infrastructure, networking has moved inside the server, with switching capability supported as part of the hypervisor. Not only that, but the demands being placed on the network by dynamic workload management and Cloud architectures mean that the network needs to be an active participant in the whole stack.

These dynamics have led Intel to focus on the virtualisation of the communications infrastructure as the next frontier of large-scale systems architecture and design. The two key enablers from Intel’s perspective are a set of commodity hardware components that are freely available to purchase and an open software stack to go with them.

With the acquisition of Fulcrum Microsystems in 2011, Intel gained a world-class provider of networking silicon that will enable it to provide high-end networking functionality for a significantly lower price than has typically been available to date. It is working to optimise both these chips and the Xeon CPUs and supporting chipsets to provide a network stack that is open, flexible and extensible.

Power is nothing without control, as they say, and the same is true for networking. The silicon building blocks would not be very valuable if each vendor had to invest in building its own custom networking software. To get around this, Intel is also working to develop a networking stack that customers can implement.

This software will not be limited to product-specific code. Intel, along with the rest of the networking industry, is focused on developing a Software Defined Networking (SDN) stack that can be used within a Cloud architecture across a variety of products from a spectrum of manufacturers, from the network through to the server.

Much of this investment will take time to come to market and be adopted. However, as the concepts appear and are adopted, they will start to erode some of the barriers to closer-knit IT integration across servers, storage and networking, allowing more of the vision of Cloud computing to be realised without the heavy lifting required today.
